Perplexity Just Built an AI That Manages Other AIs. Here's What That Means for You.

By Riz Pabani on 01-Mar-2026

Perplexity launched a product called Computer last week. It's a managed AI agent that breaks your request into subtasks, assigns each one to a different AI model, and delivers a finished result.

You say "build me a website" or "prepare a research report." Computer figures out the steps, picks the right model for each step, and runs the whole thing in the cloud. It coordinates 19 models — Claude Opus 4.6 for reasoning, Gemini for research, Grok for quick tasks, GPT-5.2 for long-context work, Nano Banana for images, Veo 3.1 for video.
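To make the routing idea concrete, here is a minimal sketch of how a request's subtasks could be matched to models. It is an illustration only, not Perplexity's implementation: the `ROUTES` table, the `Subtask` type, and `assign_models` are hypothetical names, and the task kinds are simply the roles listed above.

```python
from dataclasses import dataclass

# Hypothetical routing table. The model names come from the article;
# the task-kind keys are assumptions made for this sketch.
ROUTES = {
    "reasoning": "Claude Opus 4.6",
    "research": "Gemini",
    "quick": "Grok",
    "long_context": "GPT-5.2",
    "image": "Nano Banana",
    "video": "Veo 3.1",
}


@dataclass
class Subtask:
    kind: str          # one of the keys in ROUTES
    description: str   # what this step should produce


def assign_models(subtasks):
    """Pair each subtask with the model registered for its kind."""
    plan = []
    for task in subtasks:
        model = ROUTES.get(task.kind)
        if model is None:
            raise ValueError(f"no model registered for task kind {task.kind!r}")
        plan.append((task.description, model))
    return plan


# A made-up breakdown of "build me a website".
subtasks = [
    Subtask("research", "Collect competitor pricing pages"),
    Subtask("reasoning", "Decide the site structure"),
    Subtask("image", "Generate a hero image"),
]
plan = assign_models(subtasks)
for description, model in plan:
    print(f"{description} -> {model}")
```

In a real product the planner would also have to produce the subtasks itself; this sketch only covers the matching step.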

It costs $200 a month on their Max tier. That's the headline. Here's what actually matters.

This Isn't a Chatbot Anymore

Computer doesn't work like a chat window. You describe an outcome and walk away. It plans the work, runs sub-agents in parallel, connects to your Gmail or Slack or Notion, and checks in when it needs a decision. It can run for hours, or even months, without you touching it.

It's closer to hiring a freelancer on Upwork. Except the freelancer has access to 19 specialists and never sleeps.

CEO Aravind Srinivas positioned it explicitly against OpenClaw. Where OpenClaw runs locally on your machine with broad access to everything, Computer runs in Perplexity's cloud inside a locked-down environment. The pitch is: same ambition, less risk.

The Part Most People Will Miss

The 19-model thing sounds impressive. It is, technically. But the interesting bit isn't the number of models. It's the pattern.

Computer decides which model to use for each subtask. You don't. Your job is to describe what you want. The system figures out how to get there.

That's real progress — if you already know how to describe what you want clearly. For everyone else, it's the same problem with a fancier engine.

I see this constantly in training sessions. People assume the bottleneck is the tool. It's not. The bottleneck is the spec.

If you tell Computer "build me a website," you'll get a website. It might not be the one you wanted. Wrong tone. Wrong structure. Wrong audience. You'll spend more time fixing it than if you'd written a clear brief first.

This is the Autocomplete Machine problem at scale. More agents, more orchestration, but the input still comes from you. Garbage in, garbage out. Even when the garbage is being processed by 19 models simultaneously.

Here's a trick I teach in sessions that makes the point. Say you want a deep research report on a topic. Most people open Perplexity or ChatGPT and type something vague: "research the AI agent market." They get back something generic and wonder why the tool isn't better.

Instead, I tell people: ask the AI to write the research prompt for you. Describe what you're after in plain English — the audience, the angle, what you'd use the output for — and let it produce a detailed, structured query.

That's the easy bit. The hard work is reading that prompt back and asking yourself: does this actually match what I want? Are the constraints right? Is it looking in the right places? If not, you iterate. Tighten it. Cut the bits that don't matter. Add the bits that do.

Then you run it. And the output is a different thing entirely. Not because the model got smarter between attempts. Because the spec got better.

That loop — describe, review, iterate, execute — is the same loop you'd use with Perplexity Computer. The 19 models don't save you from a bad brief. They just execute a bad brief faster.
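The loop can be sketched in a few lines of Python. Everything here is a stand-in: `refine_brief`, `review`, and `revise` are hypothetical names, and in practice the review and the revision are you reading the prompt back and editing it, not functions.

```python
def refine_brief(draft, review, revise, max_rounds=5):
    """Revise a draft until review() reports no issues, then return it."""
    issues = review(draft)
    rounds = 0
    while issues and rounds < max_rounds:
        draft = revise(draft, issues)
        issues = review(draft)
        rounds += 1
    if issues:
        raise RuntimeError(f"brief still has open issues: {issues}")
    return draft


# Stand-in review: the brief must state these three things explicitly.
REQUIRED_LABELS = ("audience:", "angle:", "use:")

# Stand-in for the human's answers during revision (made-up values).
ANSWERS = {
    "audience:": "finance leads at mid-size firms",
    "angle:": "running costs of agent tools",
    "use:": "a two-page internal memo",
}


def review(draft):
    """Return the labels that are still missing from the draft."""
    return [label for label in REQUIRED_LABELS if label not in draft.lower()]


def revise(draft, issues):
    """Append one line per missing label."""
    return draft + "".join(f"\n{label} {ANSWERS[label]}" for label in issues)


final_brief = refine_brief("Research the AI agent market.", review, revise)
print(final_brief)
```

Only the brief that passes review gets executed. The model never changes between rounds; the text you hand it does.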

The Managed vs. Local Decision

There's a real choice emerging here, and it's worth understanding.

OpenClaw runs on your machine. You control it. You see what it's doing. You also accept full responsibility when it sends 500 messages from your iMessage account because you gave it too much access.

Computer runs in Perplexity's cloud. You don't see the machinery. But there's a permission model — it pauses before sending emails, publishing content, or making purchases. High-stakes actions need your approval.
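A pause-before-acting rule of this kind can be sketched as a simple gate. This is an assumption about the general pattern, not a description of Perplexity's internals: the action names, `HIGH_STAKES`, and `run_action` are all hypothetical.

```python
# Hypothetical action names for the three examples the article gives.
HIGH_STAKES = {"send_email", "publish_content", "make_purchase"}


def run_action(action, payload, approve):
    """Run low-stakes actions directly; ask approve() before high-stakes ones."""
    if action in HIGH_STAKES and not approve(action, payload):
        return "blocked"
    # A real agent would perform the action here; the sketch only reports it.
    return "executed"


def deny_everything(action, payload):
    """Stand-in for a human who rejects every approval request."""
    return False


def approve_everything(action, payload):
    """Stand-in for a human who accepts every approval request."""
    return True


print(run_action("summarise_inbox", {}, deny_everything))
print(run_action("send_email", {"to": "client@example.com"}, deny_everything))
print(run_action("send_email", {"to": "client@example.com"}, approve_everything))
```

The design choice worth noticing is that the gate sits in front of the action, so a missing approval means nothing happens rather than something being undone afterwards.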

Neither approach is wrong. But they require different habits.

With local agents, you need technical confidence. You're the sysadmin.

With managed agents like Computer, you need something else: clear acceptance criteria. What does "done" look like? What should it never do? What data should it never see? These aren't technical questions. They're specification questions. And most people haven't thought about them.

My advice: before you hand anything to an AI agent — local or managed — write down three things.

- What "done" looks like. Not "build a website." More like "a 5-page site with these sections, this tone, linking to these three pages, no stock photos."

- What it should never touch. Your financial accounts. Client data. Anything with passwords. Be explicit.

- Where you want a checkpoint. Before it sends anything. Before it publishes anything. Before it spends anything. Don't let it run unsupervised on tasks that matter.

That checklist works whether you're using Perplexity Computer, OpenClaw, Claude Cowork, or any other agent tool that launches next month.
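If it helps to see the three items as something you can check, here is one way to write them down as structured data. The `AgentBrief` class and its field names are hypothetical, chosen for this sketch; no agent tool is assumed to accept this format.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AgentBrief:
    done_when: List[str]     # what "done" looks like
    never_touch: List[str]   # accounts, data, or systems that are off limits
    checkpoints: List[str]   # points where the agent must stop and ask

    def missing_sections(self):
        """Return the names of any sections that were left empty."""
        return [name for name, value in vars(self).items() if not value]


brief = AgentBrief(
    done_when=[
        "5-page site with the agreed sections",
        "no stock photos",
    ],
    never_touch=[],  # left empty on purpose to show the check
    checkpoints=[
        "before sending anything",
        "before publishing anything",
        "before spending anything",
    ],
)

print(brief.missing_sections())
```

An empty section is a prompt to go back and think, not a formality. If `never_touch` is blank, you have not decided what the agent must stay away from.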

Where This Lands

The $200/month price tag puts most individuals off. Fair enough. The pattern matters more than this specific product.

AI tools are moving from "answer my question" to "do my job." That shift requires a different skill from users. Not prompting. Not technical setup. Specification. Knowing what you want, writing it down clearly, and building in checkpoints so you can catch problems before they compound.

That's the skill that transfers across every tool. Models will change. Products will launch and merge and die. The ability to write a clear brief and verify the output — that stays.

If you want to get good at this — at designing AI workflows with proper specs and verification loops, not just typing into a chat box — that's what I cover in a session.

Related Articles

Hermes Agent Goals, Background Tasks & Kanban Guide

A practical guide to Hermes Agent's autonomous features — persistent goals, background tasks, the Cu...

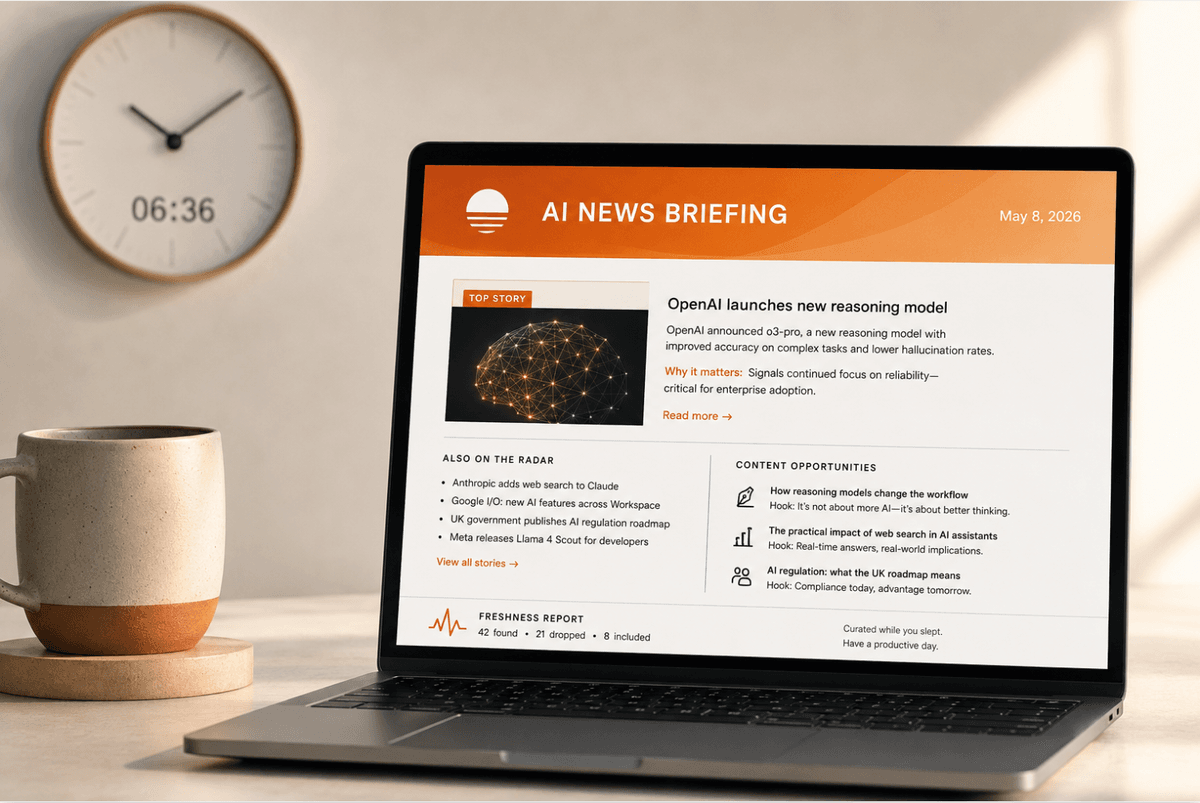

How to set up a daily AI briefing with Claude Cowork

How I built a daily AI briefing that searches, filters, and emails me a summary by 7am — using two s...

How I Actually Use Claude Cowork Every Day

How I use Claude Cowork to run my content engine, schedule AI agents, and manage my business. Real d...