Stop AI Hallucinations With This One Prompt Fix

By Riz Pabani on 03-Jun-2025

We've all been there: asking an AI a question and getting a confident-sounding answer that's completely wrong. Unlike humans, who naturally say "I'm not sure" when uncertain, large language models (LLMs) often feel compelled to generate something — even when they shouldn't.

The solution is surprisingly simple: explicitly give your AI permission to acknowledge uncertainty.

The Problem with Forced Answers

During a recent Anthropic prompt engineering roundtable, philosopher and AI researcher Amanda Askell highlighted a critical oversight in how most people interact with LLMs. Without clear instructions for handling unexpected situations, models will typically force an answer — even when the question is nonsensical or beyond their knowledge.

"If you don't give the model an option for what to do in unexpected situations, it will often try to force an answer, even when it shouldn't," Askell explains.

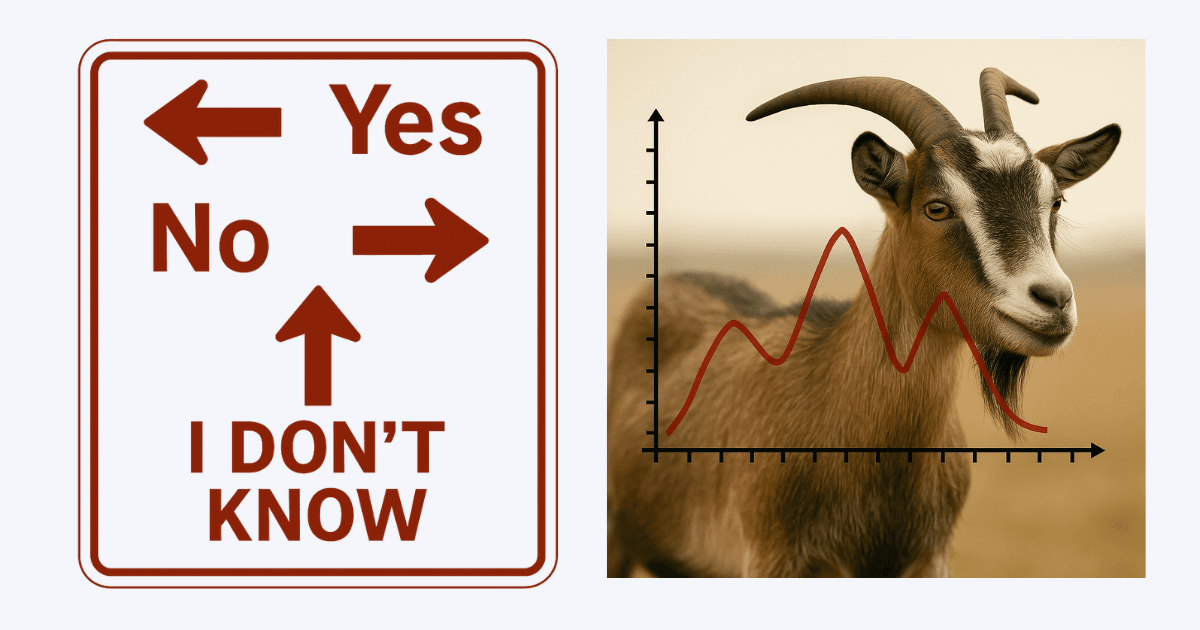

Her example is memorable: imagine asking an LLM to analyse a chart, but instead showing it a picture of a goat. Without specific guidance, the model might still attempt to find "chart-like" qualities in the goat image, producing absurd results rather than pointing out the obvious mismatch.

The Simple Fix: Build in an Escape Hatch

Askell recommends explicitly telling the model what to do when it encounters something outside its wheelhouse:

- "If you're unsure about the answer, respond with 'I don't have enough information to answer reliably.'"

- "If the question contains incorrect assumptions, point these out before attempting an answer."

- "When faced with ambiguous inputs, explain possible interpretations before proceeding."

- "If something weird happens and you're not sure what to do, just output <unsure>."

This approach reduces hallucinations and improves data quality, because the cases the model flags can be pulled out for review instead of passing through as confident answers.
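As a rough sketch of how the instructions above might be wired into an application, the snippet below appends them to a task prompt and checks each model output for the `<unsure>` marker. The names `build_system_prompt` and `route_output` are hypothetical helpers invented for illustration, and no particular LLM client is assumed; you would pass the prompt to whichever API you use.

```python
# Minimal sketch, not a definitive implementation.
# Helper names are hypothetical; the rule text comes from the list above.

UNSURE_TAG = "<unsure>"

ESCAPE_HATCH_RULES = [
    "If you're unsure about the answer, respond with "
    "'I don't have enough information to answer reliably.'",
    "If the question contains incorrect assumptions, point these out "
    "before attempting an answer.",
    "When faced with ambiguous inputs, explain possible interpretations "
    "before proceeding.",
    "If something weird happens and you're not sure what to do, "
    f"just output {UNSURE_TAG}.",
]


def build_system_prompt(task: str) -> str:
    """Append the escape-hatch rules to a task description."""
    rules = "\n".join(f"- {rule}" for rule in ESCAPE_HATCH_RULES)
    return f"{task}\n\nRules for unexpected situations:\n{rules}"


def route_output(model_output: str) -> str:
    """Return 'review' if the model used the escape hatch, else 'accept'."""
    if UNSURE_TAG in model_output:
        return "review"
    return "accept"


if __name__ == "__main__":
    print(build_system_prompt("Analyse the attached chart."))
    print(route_output("<unsure>"))
    print(route_output("Revenue rose in each quarter shown."))
```

Checking for a fixed marker keeps the routing step trivial: anything tagged goes to a human or a retry queue, and everything else continues through the pipeline.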

Beyond Individual Prompts: The "Complaint Field" Solution

Jared Friedman, Managing Partner at Y Combinator, has taken this concept further in their internal tooling, as discussed on a recent Y Combinator podcast. Instead of relying on users to craft perfect prompts, they build uncertainty handling directly into their LLM response format.

As Friedman explains, this means giving the model a dedicated space to report back when it encounters problematic prompts or lacks sufficient information:

"In the response format, we give it the ability to have part of the response be essentially a complaint to you, the developer — that you have given it confusing or underspecified information and it doesn't know what to do. We call it debug info internally… it literally ends up being like a to-do list that you, the agent developer, has to do. It's really kind of mind-blowing stuff."

This transforms the LLM from a simple response generator into an active collaborator in system improvement. The debug info surfaces edge cases and ambiguities that might otherwise lead to hallucinations.
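One way to picture the complaint field is as an extra key in a structured response. The sketch below assumes the model is asked to reply in JSON with an `answer` and a `debug_info` list; those field names, the `AgentReply` class, and both helper functions are assumptions made for illustration, not Y Combinator's actual format.

```python
# Minimal sketch under stated assumptions: the JSON shape and all names
# here are hypothetical, chosen only to illustrate a "complaint field".
import json
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AgentReply:
    answer: Optional[str]
    # Complaints from the model about confusing or underspecified input.
    debug_info: List[str] = field(default_factory=list)


def parse_reply(raw: str) -> AgentReply:
    """Parse a JSON reply, tolerating a missing debug_info field."""
    data = json.loads(raw)
    return AgentReply(
        answer=data.get("answer"),
        debug_info=list(data.get("debug_info", [])),
    )


def collect_todos(replies: List[AgentReply]) -> List[str]:
    """Gather unique complaints, in first-seen order, as a to-do list."""
    todos: List[str] = []
    for reply in replies:
        for complaint in reply.debug_info:
            if complaint not in todos:
                todos.append(complaint)
    return todos


if __name__ == "__main__":
    raw = (
        '{"answer": null, '
        '"debug_info": ["The prompt asks for a chart analysis, '
        'but the image contains no chart."]}'
    )
    for item in collect_todos([parse_reply(raw)]):
        print("TODO:", item)
```

Keeping complaints separate from the answer means the user-facing output stays clean while the developer gets a running list of prompt problems to fix.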

Why This Matters

This isn't just about technical accuracy. It's about building AI systems with what Askell calls good "character": systems that are wise, thoughtful, and honest about their limitations.

The benefits extend beyond preventing wrong answers:

- Increased trust when AI transparently acknowledges limitations

- Better collaboration built on realistic expectations

- Continuous improvement through systematic feedback loops

- Reduced hallucinations in production systems

The Philosophy Behind the Fix

Askell's approach reflects her broader philosophy about AI development. With her background in philosophy, she views prompt engineering as an exercise in developing AI with good character — systems that are careful and charitable rather than just clever.

Her testing philosophy embodies this: "I think I don't trust the model ever and then I just hammer on it." Rather than assuming reliability, she continuously probes for failure points.

Making It Practical

Whether you're crafting individual prompts or building production systems, the principle remains the same: give your AI explicit permission to acknowledge uncertainty. Sometimes the most helpful thing an AI can do is recognise when it can't help.

This represents a fundamental shift in how we approach human-AI interaction — from expecting perfect answers to building systems that know their limits and aren't afraid to admit them.

The next time you're frustrated by a confident but wrong AI answer, remember: you might just need to give it permission to say "I don't know."
