Beyond Prompts: Why Context Engineering is AI's Future

By Riz Pabani on 07-Oct-2025

We've all heard of "prompt engineering" – the art of crafting the perfect question to get the desired response from an AI. But what if I told you that's only half the story? To build truly intelligent and reliable AI systems, we need to look beyond the prompt and into the world of context engineering.

Imagine you have an AI travel agent. You ask it to book a hotel in Paris for a conference. It books you a room at the Best Western... in Paris, Kentucky. The conference, of course, is in Paris, France. Was this a failure of your prompt? Or a failure of the AI to understand the context?

This is where the distinction between prompt engineering and context engineering becomes crucial.

What is Prompt Engineering?

Prompt engineering is the foundation of our interaction with large language models (LLMs). It's about carefully crafting our input to guide the AI's output. Think of it as giving the AI specific instructions. Some of the most effective techniques include:

Role Assignment: Tell the AI who to be. "You are a senior software developer" will yield a very different response than a generic request.

Few-Shot Examples: Show, don't just tell. Providing a few examples of what you want helps the AI understand the desired format and style.

Chain of Thought (CoT): Encourage the AI to "think step-by-step." This is especially useful for complex problems, because it prompts the AI to lay out its reasoning before giving an answer.

Constraint Setting: Set clear boundaries. "Limit your response to 100 words" or "Only use the information I've provided" can help keep the AI focused.
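To make the four techniques concrete, here is a minimal Python sketch that combines them into a single chat-style message list. It assumes the `system`/`user`/`assistant` role convention used by many chat LLM APIs. The `build_prompt` function and its parameters are hypothetical, and no model is actually called.

```python
from __future__ import annotations


def build_prompt(
    question: str,
    role: str,
    examples: list[tuple[str, str]],
    max_words: int,
    source_text: str | None = None,
) -> list[dict[str, str]]:
    """Assemble a chat-style message list using the four techniques above."""
    # Role assignment: the system message tells the model who to be.
    system = f"You are {role}."
    # Constraint setting: bound the length and, optionally, the sources.
    system += f" Limit your answer to {max_words} words."
    if source_text is not None:
        system += " Only use the information provided in the user's message."
    messages = [{"role": "system", "content": system}]

    # Few-shot examples: show the desired format as earlier user/assistant turns.
    for example_question, example_answer in examples:
        messages.append({"role": "user", "content": example_question})
        messages.append({"role": "assistant", "content": example_answer})

    # Chain of thought: ask the model to reason step by step before answering.
    user = question
    if source_text is not None:
        user = f"Information:\n{source_text}\n\nQuestion: {question}"
    user += "\nThink step by step, then give your final answer."
    messages.append({"role": "user", "content": user})
    return messages


if __name__ == "__main__":
    msgs = build_prompt(
        question="Book a hotel near the conference venue for 3 nights.",
        role="an experienced corporate travel agent",
        examples=[("Book a hotel in Berlin for 2 nights.", "City: Berlin, Germany | Nights: 2")],
        max_words=100,
    )
    for m in msgs:
        print(m["role"], "->", m["content"])
```

Keeping the few-shot examples as separate user/assistant turns, rather than pasting them into one long string, is one common convention: it shows the model the exact shape of a good reply.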

The Bigger Picture: Context Engineering

If prompt engineering is about the question, context engineering is about the entire conversation. It's the broader discipline of providing the AI with everything it needs to understand the world around the prompt. This includes:

Memory Management: Like people, AI systems need both short-term memory (to keep track of the current conversation) and long-term memory (to recall past interactions and preferences).

State Management: For multi-step tasks, the AI needs to keep track of where it is in the process. Did the flight booking succeed? What time does the rental car need to be ready?

Retrieval-Augmented Generation (RAG): Relevant information is retrieved from external sources, like a company's travel policy or a real-time flight schedule, and added to the AI's context before it responds.

Tools: LLMs can't browse the internet or book a hotel on their own. Context engineering gives them the "tools" to interact with the outside world through APIs and other integrations.
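These pieces can be sketched as a small, hypothetical `ContextBuilder` class that gathers memory, task state, retrieved documents and the available tool names into the text the model actually sees. This is an illustration under simplifying assumptions, not a production design: short-term memory is a fixed-size `deque`, long-term memory and task state are plain dictionaries, and retrieval uses naive word overlap where a real RAG system would typically use embeddings and a vector index. The sample policy text is made up.

```python
from __future__ import annotations

import re
from collections import deque
from dataclasses import dataclass, field


def _words(text: str) -> set[str]:
    # Lower-case word set, ignoring punctuation.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


@dataclass
class ContextBuilder:
    """Hypothetical helper that assembles the context an LLM sees."""

    # Short-term memory: only the most recent turns survive (maxlen).
    short_term: deque = field(default_factory=lambda: deque(maxlen=6))
    # Long-term memory: durable facts and preferences about the user.
    long_term: dict[str, str] = field(default_factory=dict)
    # State management: progress of a multi-step task.
    task_state: dict[str, str] = field(default_factory=dict)
    # Knowledge base for retrieval, e.g. policy documents (name -> text).
    documents: dict[str, str] = field(default_factory=dict)
    # Names of tools the model may call.
    tools: list[str] = field(default_factory=list)

    def remember_turn(self, speaker: str, text: str) -> None:
        self.short_term.append(f"{speaker}: {text}")

    def retrieve(self, query: str, k: int = 1) -> list[str]:
        # Naive retrieval: score each document by words shared with the query.
        # A real RAG pipeline would typically use embeddings instead.
        query_words = _words(query)
        scored = [
            (len(query_words & _words(text)), name, text)
            for name, text in self.documents.items()
        ]
        hits = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
        return [f"[{name}] {text}" for _, name, text in hits[:k]]

    def build(self, user_message: str) -> str:
        sections = {
            "Known user preferences": [f"- {k}: {v}" for k, v in self.long_term.items()],
            "Task progress": [f"- {k}: {v}" for k, v in self.task_state.items()],
            "Relevant documents": self.retrieve(user_message),
            "Available tools": [", ".join(self.tools)] if self.tools else [],
            "Recent conversation": list(self.short_term),
        }
        # Skip empty sections so the model is not shown noise.
        parts = [f"{title}:\n" + "\n".join(lines) for title, lines in sections.items() if lines]
        parts.append(f"User: {user_message}")
        return "\n\n".join(parts)


if __name__ == "__main__":
    builder = ContextBuilder(tools=["search_hotels", "book_hotel"])
    builder.long_term["room"] = "non-smoking"
    builder.task_state["flight"] = "booked"
    builder.task_state["hotel"] = "pending"
    builder.documents["travel_policy"] = "Hotel bookings must cost under 200 EUR per night."
    builder.documents["expenses"] = "Submit receipts within 30 days."
    builder.remember_turn("User", "I'm attending the conference in Paris, France.")
    print(builder.build("Find a hotel for the conference."))
```

The point of the sketch is that the user's short request ("Find a hotel for the conference.") reaches the model surrounded by the conference location, the booking progress and the relevant policy, none of which the user had to repeat.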

Better Questions vs. Better Systems

Ultimately, prompt engineering gives you better questions, while context engineering gives you better systems. When you combine them, you get an AI that can understand not only your request but also the context surrounding it.
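Returning to the Paris example, the sketch below shows one way stored context can fill the gap a prompt leaves open. It assumes the model has emitted a tool call as JSON with a `name` and `arguments`, loosely modelled on the function-calling features many LLM APIs offer; the exact format, the `book_hotel` tool and the `dispatch` helper are all hypothetical. Arguments the model supplies explicitly take priority, missing ones are filled from stored context (here, that the conference is in France), and anything still missing raises an error rather than being guessed.

```python
from __future__ import annotations

import inspect
import json
from typing import Any, Callable


def book_hotel(city: str, country: str, nights: int) -> dict[str, Any]:
    # Hypothetical tool: a real implementation would call a booking API.
    return {"status": "booked", "city": city, "country": country, "nights": nights}


# Registry of tools the model is allowed to call, keyed by name.
TOOLS: dict[str, Callable[..., dict[str, Any]]] = {"book_hotel": book_hotel}


def dispatch(tool_call_json: str, context: dict[str, Any]) -> dict[str, Any]:
    """Run a model-issued tool call, filling gaps from stored context."""
    call = json.loads(tool_call_json)
    tool = TOOLS[call["name"]]
    params = inspect.signature(tool).parameters
    args = dict(call.get("arguments", {}))

    # Arguments the model gave explicitly win; context only fills gaps.
    for name in params:
        if name not in args and name in context:
            args[name] = context[name]

    # Refuse to guess: any required argument still missing is an error.
    missing = [
        name for name, p in params.items()
        if name not in args and p.default is inspect.Parameter.empty
    ]
    if missing:
        raise ValueError(f"{call['name']} is missing arguments: {missing}")
    return tool(**args)


if __name__ == "__main__":
    # Stored context: the user is attending a conference in Paris, France.
    context = {"country": "France", "nights": 3}
    call = '{"name": "book_hotel", "arguments": {"city": "Paris"}}'
    print(dispatch(call, context))
    # prints {'status': 'booked', 'city': 'Paris', 'country': 'France', 'nights': 3}
```

Without the stored `country`, the same request fails loudly instead of silently booking a room in the wrong Paris.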

So, the next time you're frustrated with an AI's response, remember that the problem might not be the prompt itself, but the lack of a richer context. The future of AI lies in building systems that can understand not just what we say, but what we mean.

This blog post is based on the concepts explained in the IBM Technology video: "Context Engineering vs. Prompt Engineering: Smarter AI with RAG & Agents"

Related Articles

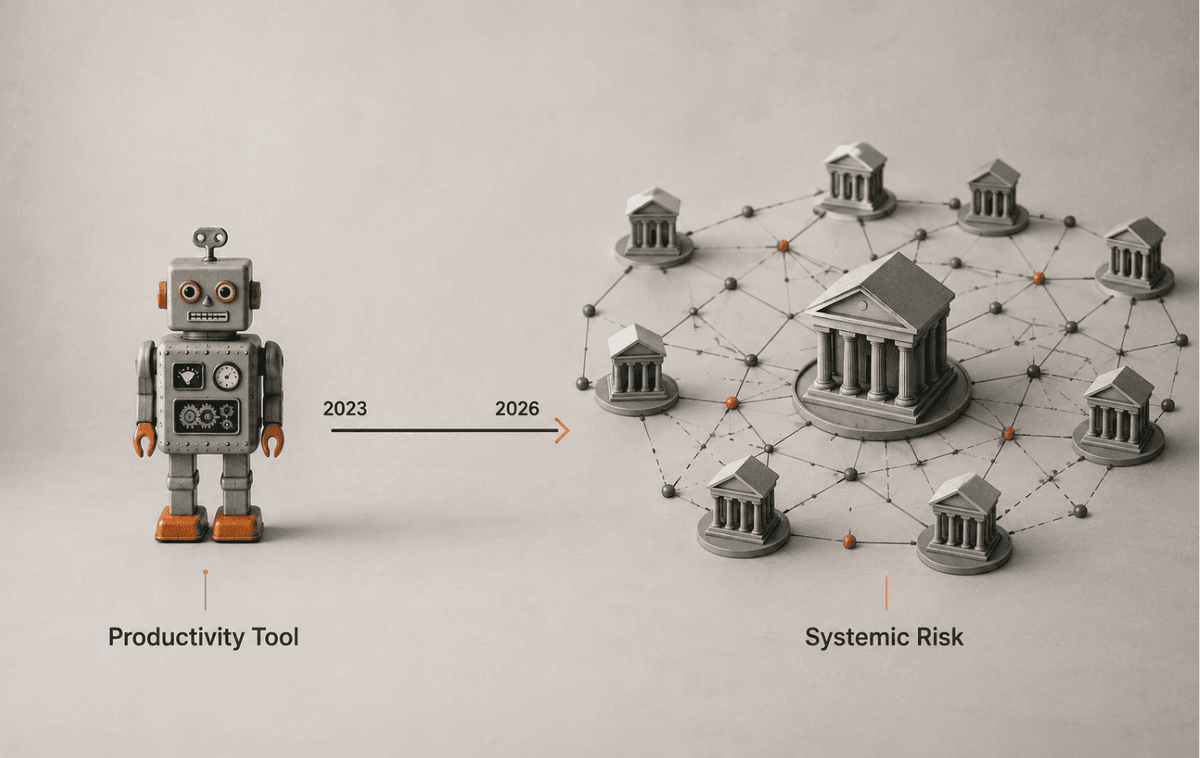

ChatGPT in UK financial services: what the FCA changed

The FCA, Bank of England and HM Treasury just issued a frontier-AI warning. Here's what changes for ...

Hermes Agent Goals, Background Tasks & Kanban Guide

A practical guide to Hermes Agent's autonomous features — persistent goals, background tasks, the Cu...

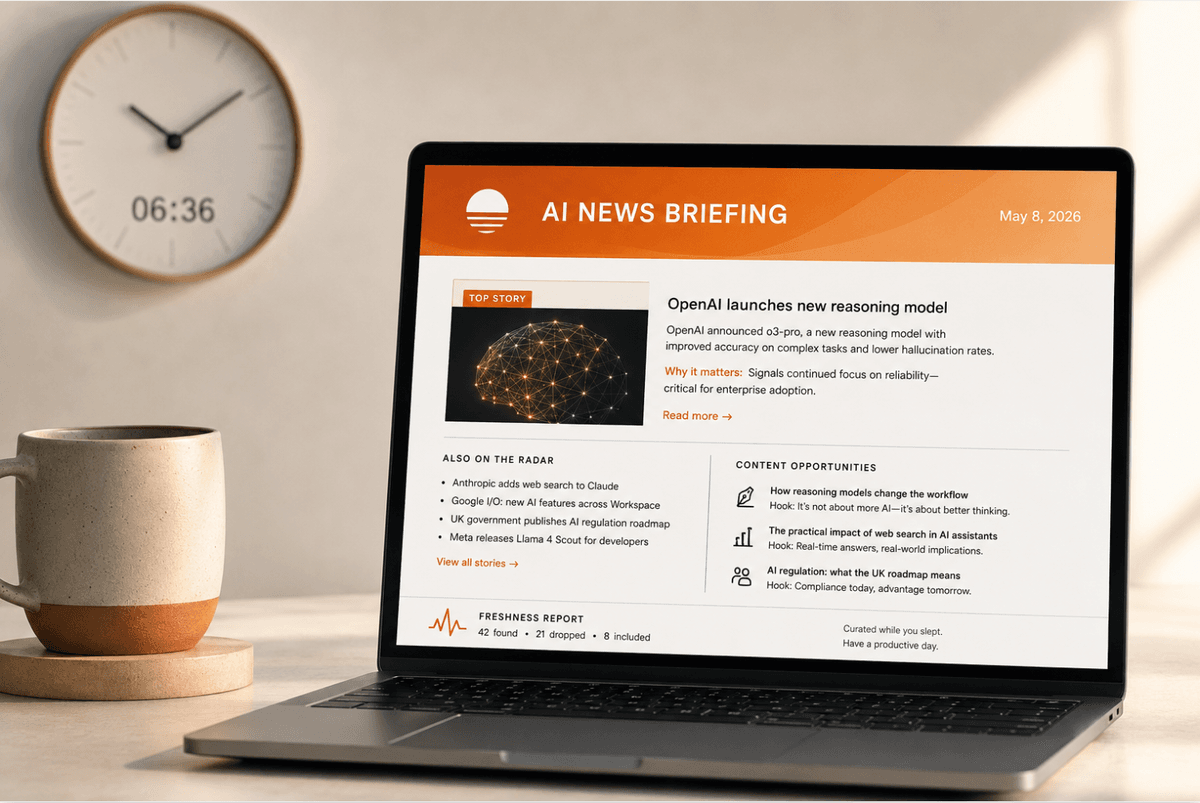

How to set up a daily AI briefing with Claude Cowork

How I built a daily AI briefing that searches, filters, and emails me a summary by 7am — using two s...