How to Pick the Right Model for the Right Task

By Riz Pabani on 28-Jul-2025

There's no one-size-fits-all approach to using large language models like ChatGPT. Depending on your goal - whether it's answering a quick question, creating an infographic, or conducting deep research - different models excel at different tasks.

Here are six key approaches to consider, each with its own strengths:

1. Quick Answers

For rapid-fire questions throughout the day, I rely on Grok in Fast mode. It's lightning-fast and has become my most-used mobile app when I'm on the go. Perfect for those moments when you need information immediately without the wait.

I also like to use the faster models for building prompts. My favourite command is "write me a prompt for this task:".

2. Reasoning Models

The world's best AI models are currently reasoning models. Unlike standard models, which start writing an answer straight away, these work through the problem first: breaking down complex questions, weighing trade-offs, and considering multiple approaches before responding.

I use reasoning models (OpenAI's o3, Gemini 2.5 Pro, Claude 4) when I need genuine strategic thinking - product roadmap decisions, competitive positioning analysis, or solving technical architecture challenges. They excel at problems where the obvious first answer isn't necessarily the best one, which makes the extra wait worthwhile for complex business decisions.

3. Tool Use

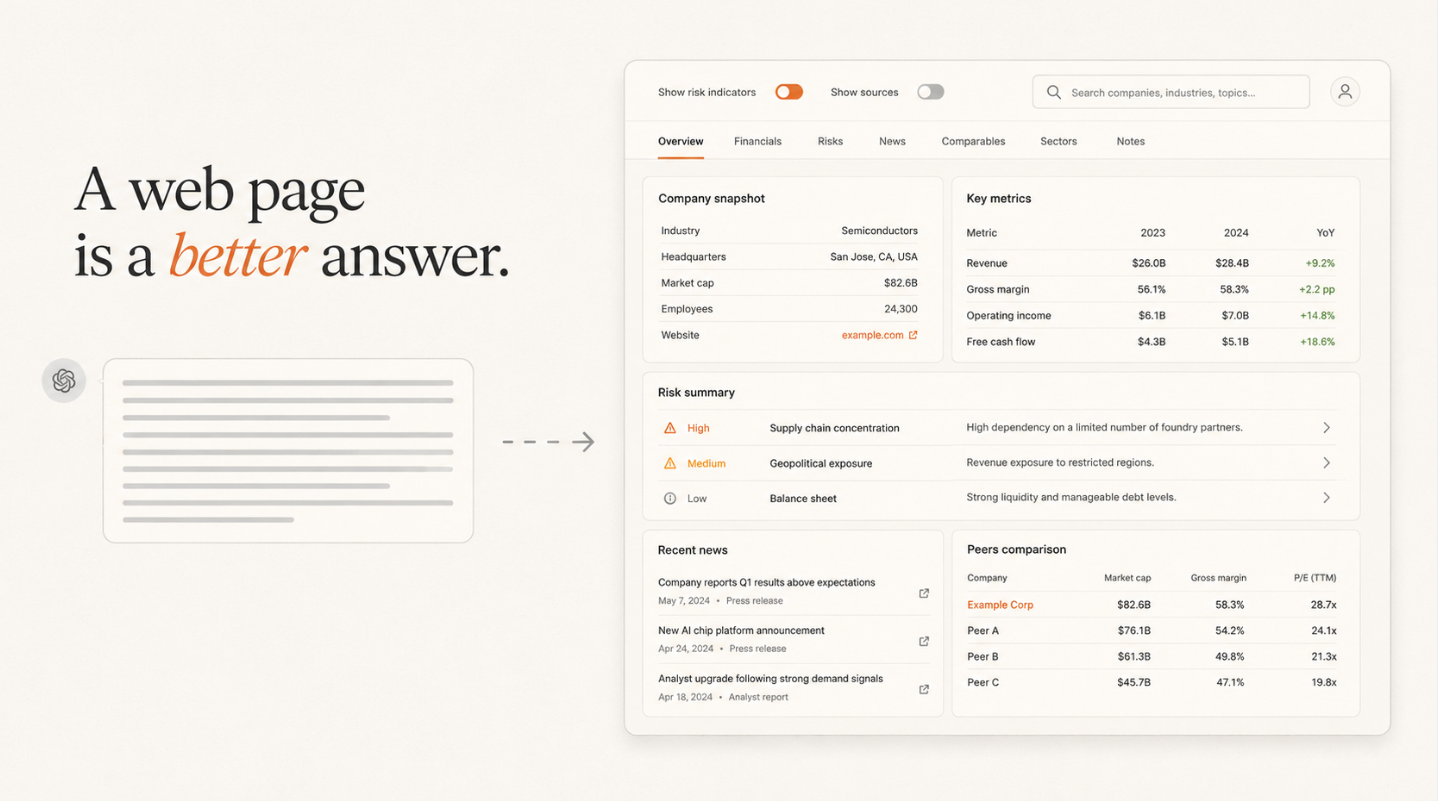

Modern AI models increasingly offer tool integration, making them feel like they're operating behind a terminal. Many now include browser capabilities, allowing the AI to rapidly read multiple websites and synthesise information relevant to your query.

These tools typically include code execution environments for calculations and algorithms, web browsing for real-time information gathering, and file processing capabilities for documents, spreadsheets, and images. More advanced implementations can integrate directly with business systems - connecting to databases, CRM platforms, project management tools, and cloud services. This transforms the AI from a simple chat interface into a comprehensive digital workspace that can orchestrate complex workflows across multiple platforms.
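Under the hood, tool use follows a simple loop: the model asks for a tool by name, the surrounding system runs it, and the result is fed back into the conversation. The sketch below shows only the dispatch step. It assumes the model emits JSON shaped like `{"tool": ..., "args": {...}}`; the tool names `add_numbers` and `word_count` are hypothetical stand-ins for a code-execution tool and a file-processing tool, and real products each define their own tool-calling format.

```python
import json
from typing import Any, Callable

# Hypothetical tool registry; real AI products expose their own tool-calling APIs.
TOOLS: dict[str, Callable[..., Any]] = {}


def register(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Make a Python function callable by name from a model's tool request."""
    TOOLS[fn.__name__] = fn
    return fn


@register
def add_numbers(a: float, b: float) -> float:
    # Stand-in for a code-execution tool doing a calculation.
    return a + b


@register
def word_count(text: str) -> int:
    # Stand-in for a file-processing tool summarising a document.
    return len(text.split())


def dispatch(tool_call_json: str) -> str:
    """Run one tool call emitted by the model and return the result as JSON text.

    Assumes the model emits JSON shaped like {"tool": name, "args": {...}}.
    """
    call = json.loads(tool_call_json)
    fn = TOOLS.get(call["tool"])
    if fn is None:
        return json.dumps({"error": f"unknown tool {call['tool']!r}"})
    result = fn(**call.get("args", {}))
    # This text is appended to the conversation so the model can keep going.
    return json.dumps({"tool": call["tool"], "result": result})


if __name__ == "__main__":
    print(dispatch('{"tool": "add_numbers", "args": {"a": 2, "b": 3}}'))
    print(dispatch('{"tool": "word_count", "args": {"text": "read three sites"}}'))
```

Keeping tools in a registry means adding a new capability (a database query, a CRM lookup) is just another registered function; the loop itself does not change.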

4. Deep Research

When exploring new topics, AI tools with web access (like Perplexity or Grok) compress hours of research into minutes. They search multiple sources at once, cross-reference claims, and deliver synthesised insights with citations - like having a research assistant read 20 articles and brief you on the key findings.

ChatGPT's strength lies in refining research through follow-up questions, while Perplexity excels at source credibility and real-time information synthesis. The result: comprehensive understanding without the typical research rabbit holes.

5. Agents & Agentic Workflow

Agents are AI systems that can take a business goal and figure out how to achieve it autonomously. Instead of just responding to questions, they plan, execute, and adapt - essentially functioning as digital employees for specific tasks.

The breakthrough is in agentic workflows where multiple AI agents work together across different business functions. One agent might monitor your industry for trends, another creates content based on those insights, while a third schedules and distributes that content across platforms. Each agent makes decisions within its domain, but they coordinate to achieve larger business objectives.

Tools like n8n and Zapier are becoming orchestration platforms for these multi-agent systems, enabling businesses to automate entire processes - from lead generation to customer onboarding - with AI agents handling the decision-making at each step.
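To make the coordination idea concrete, here is a minimal sketch of the three-agent pipeline described above. Each "agent" is a plain Python function standing in for an LLM call with its own instructions; the function names, the shared `Workspace` object, and the rule that a trend is any headline mentioning "AI" are all hypothetical. The `run_pipeline` function plays the orchestrator role that a platform like n8n or Zapier would fill.

```python
from dataclasses import dataclass, field


@dataclass
class Workspace:
    """Shared state that the agents read from and write to."""
    trends: list[str] = field(default_factory=list)
    drafts: list[str] = field(default_factory=list)
    schedule: list[tuple[str, str]] = field(default_factory=list)


def trend_agent(ws: Workspace, headlines: list[str]) -> None:
    # Decides what counts as a trend; here, any headline mentioning "AI".
    ws.trends = [h for h in headlines if "AI" in h]


def content_agent(ws: Workspace) -> None:
    # Turns each trend into a draft post.
    ws.drafts = [f"What '{t}' means for our customers" for t in ws.trends]


def scheduling_agent(ws: Workspace, platforms: list[str]) -> None:
    # Spreads drafts across platforms in round-robin order.
    if not platforms:
        raise ValueError("need at least one platform")
    ws.schedule = [(platforms[i % len(platforms)], d) for i, d in enumerate(ws.drafts)]


def run_pipeline(headlines: list[str], platforms: list[str]) -> Workspace:
    """Orchestrator: runs each agent in turn over the shared workspace."""
    ws = Workspace()
    trend_agent(ws, headlines)
    content_agent(ws)
    scheduling_agent(ws, platforms)
    return ws


if __name__ == "__main__":
    result = run_pipeline(
        ["AI pricing shifts", "Rates hold steady", "New AI regulation"],
        ["LinkedIn", "Newsletter"],
    )
    for platform, draft in result.schedule:
        print(f"{platform}: {draft}")
```

Each agent only touches its own part of the shared state, which mirrors the "decisions within its domain" idea: you can swap the trend rule for a real model call without changing the other two agents.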

6. Fine-tuning & Post-Training

One of the most powerful LLM applications is training AI on your specific business data to predict outcomes and automate decisions. This goes beyond general AI assistance to create systems that understand your unique processes, customer patterns, and business logic.

Klarna demonstrates this perfectly. They trained an AI system on thousands of customer service interactions - not just the conversations, but which approaches led to successful resolutions. Now their AI can handle routine queries and intelligently escalate cases it recognises as complex based on learned patterns.
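The escalation step can be pictured as a routing rule on top of the model's prediction. This is not Klarna's implementation; it is a generic sketch assuming a hypothetical fine-tuned classifier that returns an intent label and a confidence score. The intent names and the 0.8 threshold are illustrative choices.

```python
from dataclasses import dataclass

# Hypothetical intent labels that the business treats as routine.
ROUTINE_INTENTS = {"refund_status", "payment_due_date", "update_address"}


@dataclass
class Prediction:
    """Output of a hypothetical fine-tuned classifier for one customer message."""
    intent: str
    confidence: float  # probability assigned to `intent`, between 0 and 1


def route(pred: Prediction, threshold: float = 0.8) -> str:
    """Return "ai" to answer automatically or "human" to escalate."""
    # Escalate anything outside the routine set, however confident the model is.
    if pred.intent not in ROUTINE_INTENTS:
        return "human"
    # Escalate routine-looking cases the model is unsure about.
    if pred.confidence < threshold:
        return "human"
    return "ai"


if __name__ == "__main__":
    samples = [
        Prediction("refund_status", 0.95),
        Prediction("refund_status", 0.55),
        Prediction("fraud_dispute", 0.99),
    ]
    for p in samples:
        print(p.intent, p.confidence, "->", route(p))
```

Raising the threshold sends more cases to humans and lowers the risk of a wrong automated answer; where to set it is a business decision rather than a technical one.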

This approach typically involves taking open-source models like Meta's Llama, training them on your proprietary data, then validating performance against real-world scenarios. The result is AI that understands your business context, terminology, and successful resolution patterns better than a general-purpose model typically can.
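Most of the practical work in fine-tuning is preparing the data. The sketch below assumes hypothetical support-ticket records with `customer_message`, `agent_reply`, and `resolved` fields. It keeps only resolved tickets (learning from what worked), holds some back for validation, and writes the rest in the chat-style `messages` JSONL layout that several fine-tuning tools accept. Check your chosen tool's documentation for its exact format; the system prompt here is also an illustrative assumption.

```python
import json
import random

SYSTEM_PROMPT = "You are a helpful customer service assistant."  # hypothetical


def to_record(ticket: dict) -> dict:
    """Convert one ticket into a chat-style training example."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": ticket["customer_message"]},
            {"role": "assistant", "content": ticket["agent_reply"]},
        ]
    }


def build_datasets(tickets: list[dict], holdout_fraction: float = 0.2, seed: int = 0):
    """Keep resolved tickets, then split them into training records and a held-out set."""
    resolved = [t for t in tickets if t["resolved"]]  # learn only from what worked
    random.Random(seed).shuffle(resolved)  # reproducible shuffle before splitting
    n_holdout = max(1, int(len(resolved) * holdout_fraction)) if resolved else 0
    holdout, train = resolved[:n_holdout], resolved[n_holdout:]
    return [to_record(t) for t in train], holdout


def to_jsonl(records: list[dict]) -> str:
    """Serialise records as one JSON object per line."""
    return "\n".join(json.dumps(r) for r in records)


if __name__ == "__main__":
    tickets = [
        {"customer_message": f"question {i}", "agent_reply": f"answer {i}", "resolved": i % 5 != 0}
        for i in range(10)
    ]
    train, holdout = build_datasets(tickets)
    print(len(train), "training examples,", len(holdout), "held out")
    print(to_jsonl(train).splitlines()[0])
```

The held-out tickets are never shown to the model during training, so comparing its answers on them against the agents' real replies gives a first, rough check of real-world performance.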

Bonus: Voice Interaction

One of my favourite outcomes from the AI evolution has been the transformation of voice interaction. People now use it for full conversations about relationships and work challenges, for guided meditation, and for children's story time.

My preferred use case is learning. I can take a 30-minute walk with headphones and truly understand complex topics like the transformer architecture underlying LLMs. I can ask unlimited questions at different complexity levels, only progressing when I feel ready. It's like speaking with the most patient and knowledgeable teacher imaginable.

ChatGPT's voice implementation is remarkable - my kids absolutely love story time with it too.

The Key Takeaway

The key isn't choosing one approach over another - it's understanding when each tool serves you best. Start with quick models for daily questions, escalate to reasoning models for important decisions, and explore agents for repetitive workflows. As AI capabilities expand rapidly, the businesses that thrive will be those that match the right tool to each specific challenge.
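As a rough illustration, that rule of thumb can be written down as a small decision function. The three yes/no questions and the order in which they are checked are assumptions made for this sketch, not a formal method; real tasks often mix categories.

```python
from enum import Enum


class Approach(Enum):
    QUICK = "fast model"
    DEEP_RESEARCH = "web-enabled research tool"
    REASONING = "reasoning model"
    AGENT = "agentic workflow"


def choose_approach(*, repetitive: bool, high_stakes: bool, needs_current_sources: bool) -> Approach:
    """Pick a starting approach for a task; the order of checks is a judgment call."""
    if repetitive:
        return Approach.AGENT  # automate recurring work rather than re-asking each time
    if high_stakes:
        return Approach.REASONING  # worth the extra wait for important decisions
    if needs_current_sources:
        return Approach.DEEP_RESEARCH  # web access and citations
    return Approach.QUICK  # default for everyday questions


if __name__ == "__main__":
    print(choose_approach(repetitive=False, high_stakes=True, needs_current_sources=False).value)
```

A high-stakes question that also needs fresh sources might reasonably start with deep research and then move to a reasoning model; this function deliberately returns a single starting point.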

Related Articles

How to read a financial filing with AI

How to read a financial filing with AI: I turned SpaceX's 12MB IPO S-1 into a page anyone can skim —...

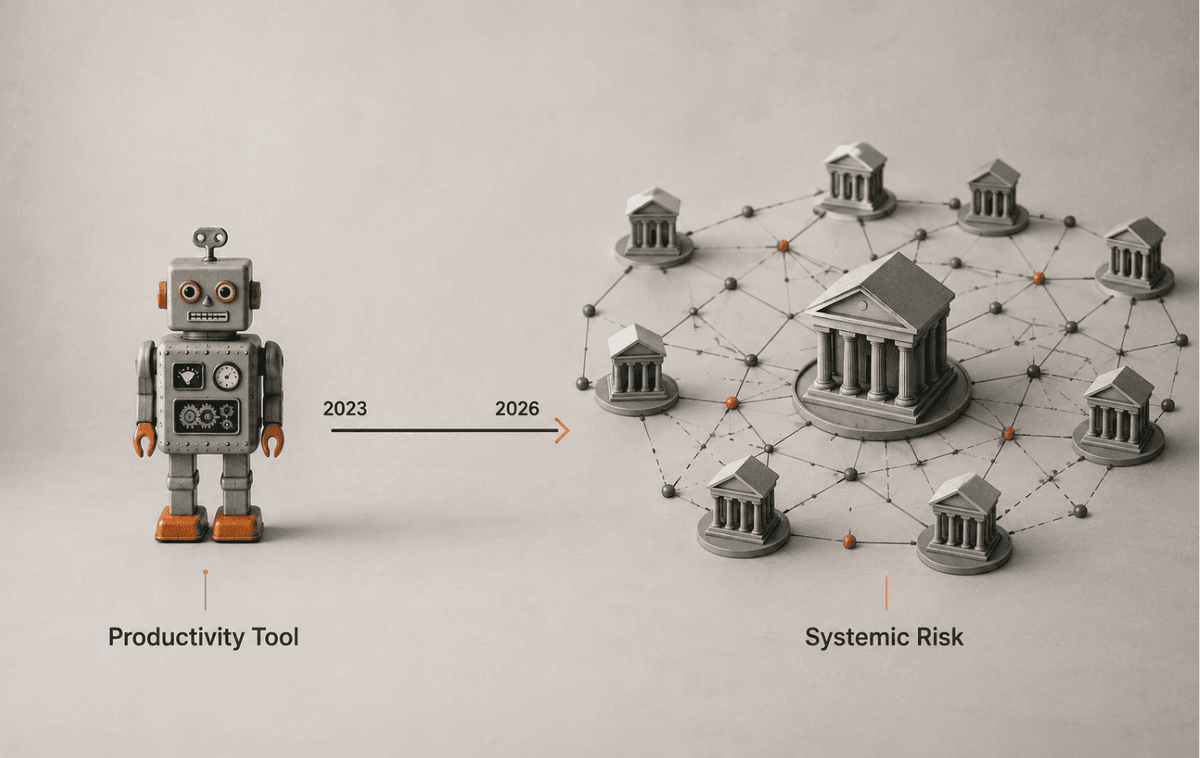

ChatGPT in UK financial services: what the FCA changed

The FCA, Bank of England and HM Treasury just issued a frontier-AI warning. Here's what changes for ...

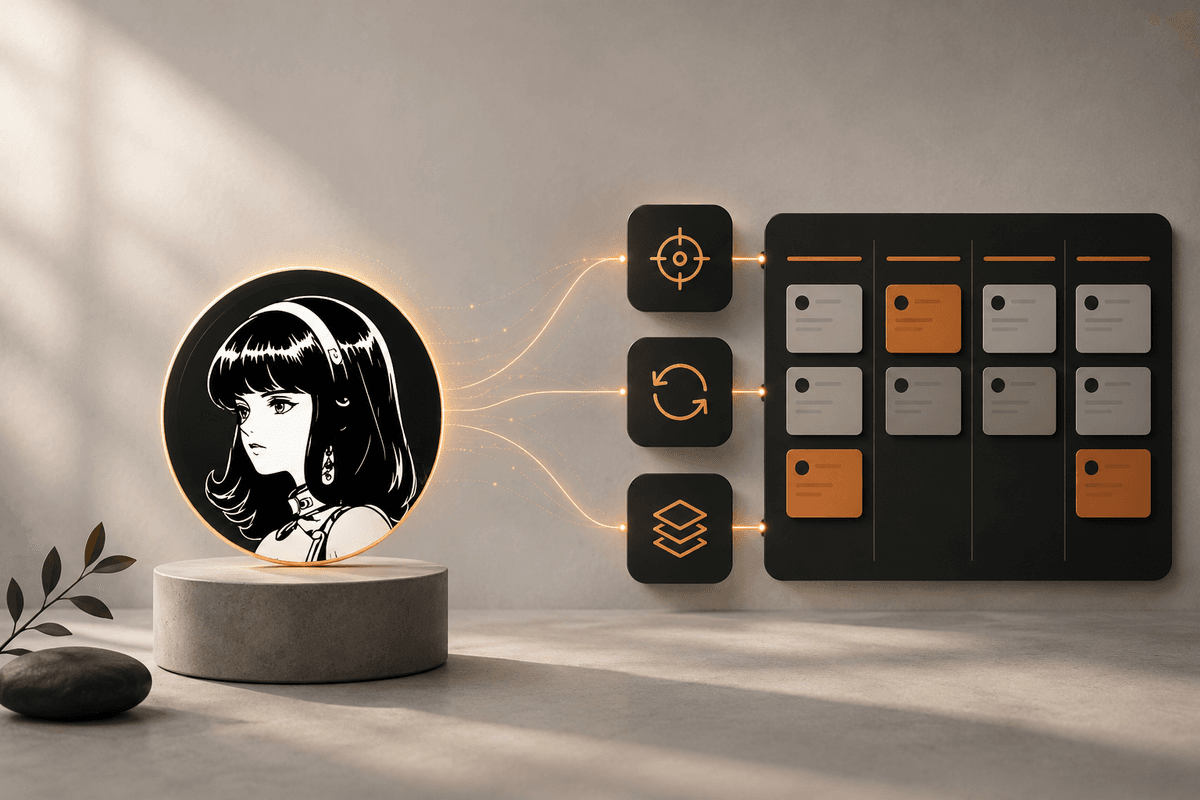

Hermes Agent Goals, Background Tasks & Kanban Guide

A practical guide to Hermes Agent's autonomous features — persistent goals, background tasks, the Cu...