Why AI Pioneer Calls LLMs 'People Spirits' in Computers

By Riz Pabani on 20-Jun-2025

Key takeaways:

- LLMs have superhuman encyclopaedic memory but also "cognitive deficits" — they hallucinate, have jagged intelligence, and reset every conversation

- Karpathy argues for human-AI cooperation rather than full automation, with an "autonomy slider" approach

- The bottleneck isn't AI generation speed — it's human verification speed

Andrej Karpathy, the former Director of AI at Tesla who helped build its self-driving technology, recently presented at YC's AI Startup School.

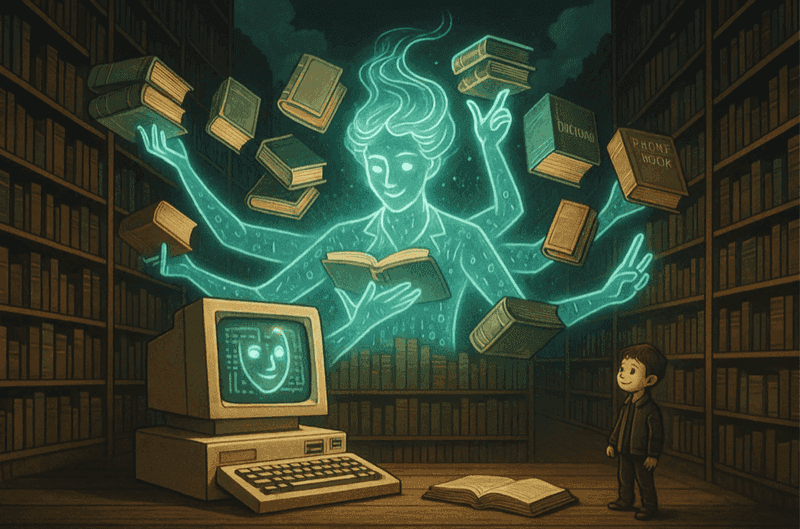

He argued that LLMs aren't just software but something closer to 'people spirits'. Training transformer architectures, the bones of LLMs like ChatGPT, on human-written text ends up creating machines with a human-like psychology.

AI feels superhuman with its encyclopaedic knowledge. Karpathy references the 1988 film Rain Man, where Dustin Hoffman's character has an almost perfect memory, able to read a phone book and remember all the names and numbers. "I kind of feel like LLMs are very similar," Karpathy notes, highlighting how they can instantly recall vast amounts of information with startling accuracy.

The analogy hit me because it captures something I've been feeling but couldn't articulate. Sure, I've had dictionaries, Wikipedia, and Google at my fingertips for years, but interacting with an LLM feels fundamentally different. It's like having that Rain Man-level memory available for conversation — I can reference an obscure paper from the 1980s, ask about a specific line from Shakespeare, and dive into quantum physics, all in the same chat. The knowledge isn't just accessible; it's conversational.

But here's where things get unsettling. Despite their superhuman memory, LLMs have what Karpathy calls "cognitive deficits" that would be deeply problematic in any human colleague.

Confident hallucination

AI doesn't just make mistakes — it fabricates entire citations, creates fake research papers, and presents fiction as fact, all while sounding completely authoritative. It's like working with someone who confidently tells you elaborate lies without realising they're lying.

Jagged intelligence

This might be the most disturbing aspect. LLMs can write sophisticated code or analyse complex literature, then confidently insist that 9.11 is greater than 9.9 or miscount how many times the letter "r" appears in "strawberry". These are, as Karpathy says, "mistakes that basically no human will make": errors that reveal fundamental gaps in understanding that we're only beginning to map.
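Both of those slips are trivial to check deterministically, which is one reason a human (or a small script) makes a good verifier for this kind of output. The snippet below is only an illustrative sketch: the helper names `is_greater` and `count_letter` are made up for this example and do not come from Karpathy's talk.

```python
from decimal import Decimal


def is_greater(a: str, b: str) -> bool:
    """Compare two decimal numbers given as strings, exactly (hypothetical helper)."""
    return Decimal(a) > Decimal(b)


def count_letter(word: str, letter: str) -> int:
    """Count case-insensitive occurrences of one letter in a word (hypothetical helper)."""
    return word.lower().count(letter.lower())


print(is_greater("9.11", "9.9"))        # False: 9.11 is smaller than 9.9
print(count_letter("strawberry", "r"))  # 3
```

A check like this costs almost nothing to run, which is the point: verifying a claim is often far cheaper than generating it.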

Digital amnesia

Karpathy uses a haunting analogy from the movies Memento and 50 First Dates, where protagonists wake up each day with wiped memories. "Their weights are fixed and their context windows get wiped every single morning," he explains. "It's really problematic to go to work or have relationships when this happens." Unlike a human coworker who gradually learns your organisation and builds expertise, AI starts fresh every conversation, permanently stuck in a kind of digital Groundhog Day.

Surprisingly gullible

You can convince most LLMs they're wrong about basic facts with enough persistence. That eagerness to please can be endearing, but it also leaves them vulnerable to manipulation and prompt-injection attacks.

Karpathy's framework reveals why we're dealing with something unprecedented: "You have to simultaneously think through this superhuman thing that has a bunch of cognitive deficits and issues… how do we program them and work around their deficits and enjoy their superhuman powers?"

This brings us to what Karpathy sees as the real opportunity: human-AI co-operation, not replacement. "We're now kind of like co-operating with AIs," he explains, "and usually they are doing the generation and we as humans are doing the verification."

This isn't just a temporary workaround — it's a fundamental design principle. Karpathy argues we should be building "partial autonomy" systems with what he calls an "autonomy slider." Think of tools like Cursor for coding, where you can choose minimal AI assistance (tab completion) or let it rewrite entire files, depending on your comfort level and the task complexity.
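One way to picture the autonomy slider is as a cap on how large a change the AI may make on its own. The sketch below is an assumption-laden illustration, not how Cursor or any real tool is implemented: the `Autonomy` levels and the `allowed` function are hypothetical names invented here.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    """Illustrative scopes of AI action, from least to most autonomous (assumed levels)."""
    TAB_COMPLETE = 1    # suggest the next few tokens
    EDIT_SELECTION = 2  # rewrite a highlighted chunk
    EDIT_FILE = 3       # rewrite an entire file
    EDIT_REPO = 4       # make changes across the whole repository


def allowed(requested: Autonomy, slider: Autonomy) -> bool:
    """Return True if the requested scope is within the user's slider setting."""
    return requested <= slider


# The user is comfortable with whole-file rewrites, but no further.
slider = Autonomy.EDIT_FILE
print(allowed(Autonomy.TAB_COMPLETE, slider))  # True
print(allowed(Autonomy.EDIT_REPO, slider))     # False
```

The design choice worth noting is that the human sets the ceiling per task, so the same tool can be cautious on unfamiliar code and more hands-off on routine work.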

The key insight? We need to optimise for speed of verification, not just generation. As Karpathy puts it: "It's not useful to me to get a diff of 10,000 lines of code to my repo… I'm still the bottleneck." The AI might generate code instantly, but if it takes me hours to review and debug, we've gained nothing.
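A simple way to respect that bottleneck is to hand the human small, reviewable pieces instead of one enormous diff. The following is a minimal sketch under stated assumptions: `review_batches` is a hypothetical helper, and the 200-line budget is an arbitrary figure chosen for illustration, not a number from the talk.

```python
from typing import Iterator


def review_batches(changed_lines: list[str], max_lines: int = 200) -> Iterator[list[str]]:
    """Split a list of changed lines into batches small enough to review in one sitting.

    max_lines is an assumed review budget, not a recommended value.
    """
    if max_lines < 1:
        raise ValueError("max_lines must be at least 1")
    for start in range(0, len(changed_lines), max_lines):
        yield changed_lines[start:start + max_lines]


# A 10,000-line diff becomes 50 batches of 200 lines each.
diff = [f"line {i}" for i in range(10_000)]
batches = list(review_batches(diff, max_lines=200))
print(len(batches))  # 50
```

Chunking does not make the AI any faster, but it keeps each verification step short enough that the human can actually keep up.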

Rather than dreaming of fully autonomous agents, we should focus on building what Karpathy calls "Iron Man suits" instead of "Iron Man robots" — systems that augment human capability while keeping humans firmly in control. Given that even Tesla's self-driving technology took over a decade to develop, perhaps we should approach AI automation with similar patience and rigour.

As AI continues to evolve, Karpathy's framework offers a roadmap: understand the psychology, design for co-operation, and build systems that make the most of both human and artificial intelligence. The future isn't about replacing humans — it's about creating better partnerships with these fascinating, flawed digital minds.

Related Articles

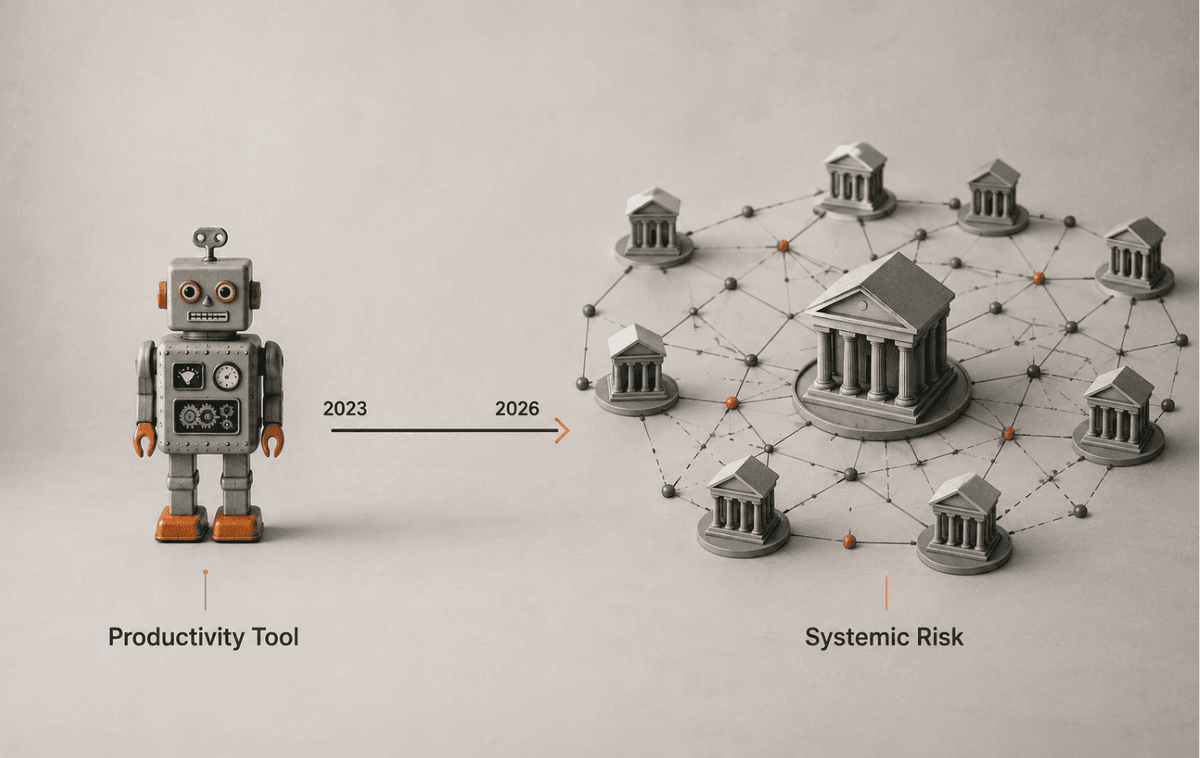

ChatGPT in UK financial services: what the FCA changed

The FCA, Bank of England and HM Treasury just issued a frontier-AI warning. Here's what changes for ...

Hermes Agent Goals, Background Tasks & Kanban Guide

A practical guide to Hermes Agent's autonomous features — persistent goals, background tasks, the Cu...

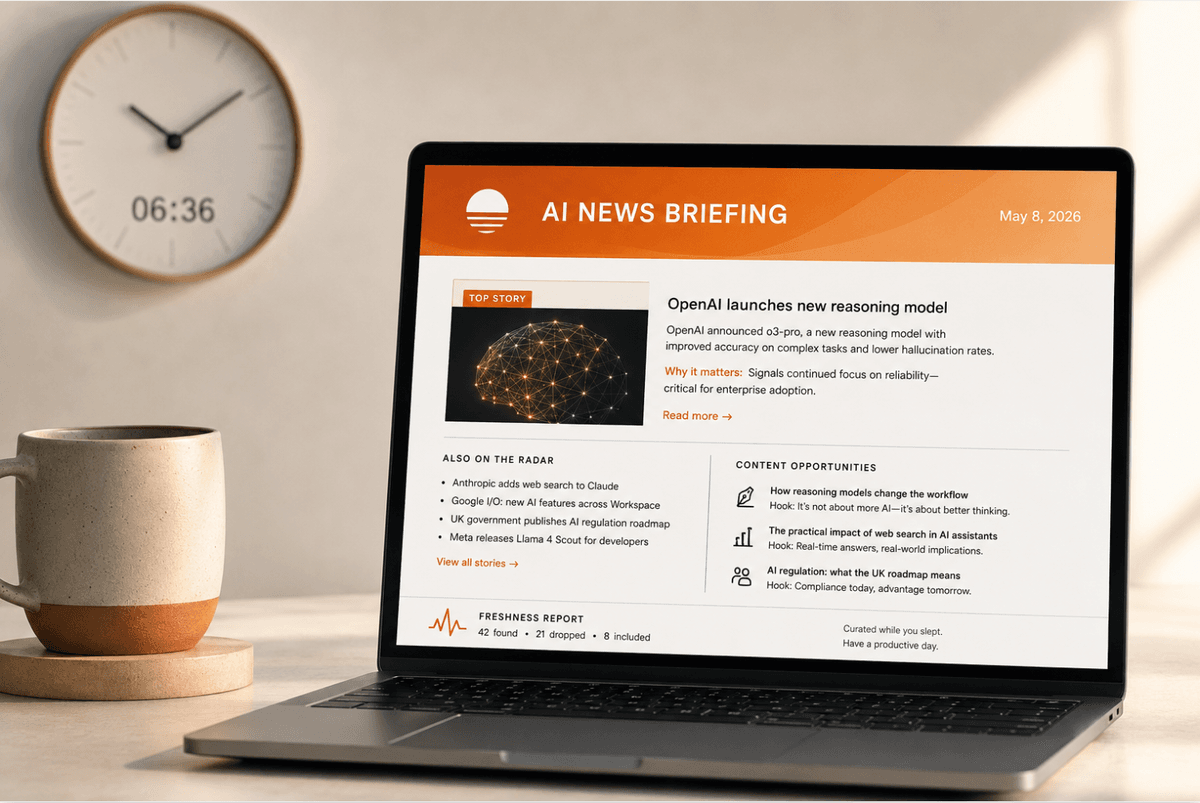

How to set up a daily AI briefing with Claude Cowork

How I built a daily AI briefing that searches, filters, and emails me a summary by 7am — using two s...